| a/README.md | b/README.md | ||

|---|---|---|---|

| 1 | # Deep Learning-Based Gait Recognition Using Smartphones in the Wild |

1 | # Deep Learning-Based Gait Recognition Using Smartphones in the Wild |

| 2 | 2 | ||

| 3 | This is the source code for "Deep learning-based gait recognition using smartphones in the wild". We provide the dataset and the pretrained model. |

3 | This is the source code for "Deep learning-based gait recognition using smartphones in the wild". We provide the dataset and the pretrained model. |

| 4 | 4 | ||

| 5 | Zou Q, Wang Y, Zhao Y, Wang Q and Li Q, Deep learning-based gait recognition using smartphones in the wild, IEEE Transactions on Information Forensics and Security, vol. 15, |

5 | Zou Q, Wang Y, Zhao Y, Wang Q and Li Q, Deep learning-based gait recognition using smartphones in the wild, IEEE Transactions on Information Forensics and Security, vol. 15,

|

| 6 | no. 1, pp. 3197-3212, 2020. |

6 | no. 1, pp. 3197-3212, 2020. |

| 7 | 7 | ||

| 8 | Compared with other biometrics, gait has the advantages of being unobtrusive and difficult to conceal. Inertial sensors such as accelerometers and gyroscopes are often used to capture gait dynamics. These inertial sensors are now commonly integrated into smartphones and widely used by the average person, which makes collecting gait data convenient and inexpensive. In this paper, we study gait recognition using smartphones in the wild. Unlike traditional methods, which often require the person to walk along a specified road and/or at a normal walking speed, the proposed method collects inertial gait data under unconstrained conditions, without knowing when, where, and how the user walks. To achieve high performance in person identification and authentication, deep-learning techniques are used to learn and model gait biometrics from the walking data. Specifically, a hybrid deep neural network is proposed for robust gait feature representation, in which features in the space domain and in the time domain are successively abstracted by a convolutional neural network and a recurrent neural network. In the experiments, two datasets collected by smartphones from a total of 118 subjects are used for evaluation. Experiments show that the proposed method achieves over 93.5% and 93.7% accuracy in person identification and authentication, respectively. |

8 | Compared with other biometrics, gait has the advantages of being unobtrusive and difficult to conceal. Inertial sensors such as accelerometers and gyroscopes are often used to capture gait dynamics. These inertial sensors are now commonly integrated into smartphones and widely used by the average person, which makes collecting gait data convenient and inexpensive. In this paper, we study gait recognition using smartphones in the wild. Unlike traditional methods, which often require the person to walk along a specified road and/or at a normal walking speed, the proposed method collects inertial gait data under unconstrained conditions, without knowing when, where, and how the user walks. To achieve high performance in person identification and authentication, deep-learning techniques are used to learn and model gait biometrics from the walking data. Specifically, a hybrid deep neural network is proposed for robust gait feature representation, in which features in the space domain and in the time domain are successively abstracted by a convolutional neural network and a recurrent neural network. In the experiments, two datasets collected by smartphones from a total of 118 subjects are used for evaluation. Experiments show that the proposed method achieves over 93.5% and 93.7% accuracy in person identification and authentication, respectively. |

| 9 | 9 | ||

| 10 | # Networks |

10 | # Networks

|

| 11 | ## Network Architecture for Gait-extraction |

11 | ## Network Architecture for Gait-extraction

|

| 12 |  |

12 |

|

| 13 | ### Network Architecture Details for Gait-extraction |

13 | ### Network Architecture Details for Gait-extraction

|

| 14 |  |

14 |  |

| 15 | 15 | ||

| 16 | ## Network Architecture for Identification |

16 | ## Network Architecture for Identification

|

| 17 |  |

17 |  |

| 18 | 18 | ||

| 19 | ### CNN+LSTM |

19 | ### CNN+LSTM

|

| 20 | It is the network introduced in Fig. 4, which combines the above two networks. The whole network has to be trained from scratch. |

20 | It is the network introduced in Fig. 4, which combines the above two networks. The whole network has to be trained from scratch.

|

| 21 | ### CNNfix+LSTM |

21 | ### CNNfix+LSTM

|

| 22 | It is also the network introduced in Fig. 4. During training, the CNN parameters are fixed to those of a CNN model that has been trained independently, and the parameters of the LSTM and the fully connected layer are trained from scratch. |

22 | It is also the network introduced in Fig. 4. During training, the CNN parameters are fixed to those of a CNN model that has been trained independently, and the parameters of the LSTM and the fully connected layer are trained from scratch.

|

| 23 | ### CNN+LSTMfix |

23 | ### CNN+LSTMfix

|

| 24 | It is also the network introduced in Fig. 4. During training, the LSTM parameters are fixed to those of an LSTM model that has been trained independently, and the CNN and the fully connected layer are trained from scratch. |

24 | It is also the network introduced in Fig. 4. During training, the LSTM parameters are fixed to those of an LSTM model that has been trained independently, and the CNN and the fully connected layer are trained from scratch. |

| 25 | 25 | ||

| 26 | ## Network Architecture for Authentication |

26 | ## Network Architecture for Authentication

|

| 27 |  |

27 |

|

| 28 | ### CNN+LSTM horizontal |

28 | ### CNN+LSTM horizontal

|

| 29 | The ‘CNN+LSTM’ network, as introduced in Fig. 5, takes horizontally aligned data pairs as input. The CNN weights are not fixed during training. |

29 | The ‘CNN+LSTM’ network, as introduced in Fig. 5, takes horizontally aligned data pairs as input. The CNN weights are not fixed during training.

|

| 30 | ### CNN+LSTM vertical |

30 | ### CNN+LSTM vertical

|

| 31 | The ‘CNN+LSTM’ network takes vertically aligned data pairs as input. The CNN weights are not fixed during training. |

31 | The ‘CNN+LSTM’ network takes vertically aligned data pairs as input. The CNN weights are not fixed during training.

|

| 32 | ### CNNfix+LSTM horizontal |

32 | ### CNNfix+LSTM horizontal

|

| 33 | The ‘CNNfix+LSTM’ network, as introduced in Fig. 5, takes horizontally aligned data pairs as input. The CNN weights are fixed during training. |

33 | The ‘CNNfix+LSTM’ network, as introduced in Fig. 5, takes horizontally aligned data pairs as input. The CNN weights are fixed during training.

|

| 34 | ### CNNfix+LSTM vertical |

34 | ### CNNfix+LSTM vertical

|

| 35 | The ‘CNNfix+LSTM’ network takes vertically aligned data pairs as input. The CNN weights are fixed during training. |

35 | The ‘CNNfix+LSTM’ network takes vertically aligned data pairs as input. The CNN weights are fixed during training. |

| 36 | 36 | ||

| 37 | ## Code Download: |

37 | ## Code Download:

|

| 38 | You can download the code from the following link: |

38 | You can download the code from the following link: |

| 39 | 39 | ||

| 40 | https://github.com/qinnzou/Gait-Recognition-Using-Smartphones/tree/master/code |

40 | https://github.com/qinnzou/Gait-Recognition-Using-Smartphones/tree/master/code |

| 41 | 41 | ||

| 42 | # whuGAIT Datasets |

42 | # whuGAIT Datasets

|

| 43 | ## Dataset for Identification & Authentication |

43 | ## Dataset for Identification & Authentication

|

| 44 |  |

44 |

|

| 45 | A total of 118 subjects took part in the data collection. Among them, 20 subjects collected a larger amount of data over two days, each contributing thousands of samples, and 98 subjects collected a smaller amount of data in one day, each contributing hundreds of samples. Each data sample contains 3-axis accelerometer data and 3-axis gyroscope data. The sampling rate of all sensor data is 50 Hz. For different evaluation purposes, we construct six datasets from the collected data. |

45 | A total of 118 subjects took part in the data collection. Among them, 20 subjects collected a larger amount of data over two days, each contributing thousands of samples, and 98 subjects collected a smaller amount of data in one day, each contributing hundreds of samples. Each data sample contains 3-axis accelerometer data and 3-axis gyroscope data. The sampling rate of all sensor data is 50 Hz. For different evaluation purposes, we construct six datasets from the collected data.

|

| 46 | ### Dataset #1 |

46 | ### Dataset #1

|

| 47 | This dataset is collected from 118 subjects. Using the step-segmentation algorithm introduced in Section III-B, the collected gait data can be annotated into steps. Following the finding that two-step data perform well in gait recognition [7], we build gait samples by dividing the gait curve into segments of two consecutive steps. Each sample is then interpolated to a fixed length of 128 using linear interpolation. To enlarge the dataset, neighboring samples overlap by one step for all subjects. In this way, a total of 36,844 gait samples are collected. We use 33,104 samples for training and the remaining 3,740 for testing. |

47 | This dataset is collected from 118 subjects. Using the step-segmentation algorithm introduced in Section III-B, the collected gait data can be annotated into steps. Following the finding that two-step data perform well in gait recognition [7], we build gait samples by dividing the gait curve into segments of two consecutive steps. Each sample is then interpolated to a fixed length of 128 using linear interpolation. To enlarge the dataset, neighboring samples overlap by one step for all subjects. In this way, a total of 36,844 gait samples are collected. We use 33,104 samples for training and the remaining 3,740 for testing.

|
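The two-step sampling and linear interpolation described for Dataset #1 can be sketched as follows. This is a minimal illustration in plain Python, not the repository's preprocessing code: the function names `resample_linear` and `two_step_samples`, and the `step_bounds` list of step boundary indices, are assumptions introduced here, and the step-segmentation algorithm itself is not reproduced.

```python
from typing import List, Sequence


def resample_linear(signal: Sequence[float], target_len: int = 128) -> List[float]:
    """Linearly interpolate a 1-D signal to exactly target_len points."""
    n = len(signal)
    if n < 2 or target_len < 2:
        raise ValueError("need at least two input points and two output points")
    out = []
    for i in range(target_len):
        # Position of output point i on the input index axis [0, n - 1].
        pos = i * (n - 1) / (target_len - 1)
        lo = min(int(pos), n - 2)  # left neighbour, clamped so lo + 1 is valid
        frac = pos - lo
        out.append(signal[lo] * (1.0 - frac) + signal[lo + 1] * frac)
    return out


def two_step_samples(
    signal: Sequence[float],
    step_bounds: Sequence[int],
    overlap: bool = True,
    target_len: int = 128,
) -> List[List[float]]:
    """Cut one sensor channel into two-step segments and resample each segment.

    step_bounds holds the indices where steps begin and end (hypothetical
    output of a step-segmentation stage), e.g. [0, 25, 50, 75] marks three steps.
    With overlap=True neighbouring samples share one step.
    """
    stride = 1 if overlap else 2
    samples = []
    for k in range(0, len(step_bounds) - 2, stride):
        start, end = step_bounds[k], step_bounds[k + 2]
        samples.append(resample_linear(signal[start:end], target_len))
    return samples
```

With four steps, the overlapping variant yields three samples (steps 1-2, 2-3, 3-4) and the non-overlapping variant yields two, which is why overlap enlarges the dataset. In practice the same cut would be applied to all six sensor channels.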

| 48 | ### Dataset #2 |

48 | ### Dataset #2

|

| 49 | This dataset is collected from 20 subjects. We also divide the gait curve into two-step samples and interpolate them to the same length of 128. Because each subject in this dataset has much more data than those in Dataset #1, the samples do not overlap. In total, 49,275 samples are collected, of which 44,339 are used for training and the remaining 4,936 for testing. |

49 | This dataset is collected from 20 subjects. We also divide the gait curve into two-step samples and interpolate them to the same length of 128. Because each subject in this dataset has much more data than those in Dataset #1, the samples do not overlap. In total, 49,275 samples are collected, of which 44,339 are used for training and the remaining 4,936 for testing.

|

| 50 | ### Dataset #3 |

50 | ### Dataset #3

|

| 51 | This dataset is collected from the same 118 subjects as Dataset #1. Unlike Dataset #1, we divide the gait curve using a fixed time length instead of a step length. Specifically, each sample spans a time interval of 2.56 seconds. Since the sampling rate is 50 Hz, the length of each sample is also 128. Neighboring samples overlap by 1.28 seconds to enlarge the dataset. A total of 29,274 samples are collected, of which 26,283 are used for training and the remaining 2,991 for testing. |

51 | This dataset is collected from the same 118 subjects as Dataset #1. Unlike Dataset #1, we divide the gait curve using a fixed time length instead of a step length. Specifically, each sample spans a time interval of 2.56 seconds. Since the sampling rate is 50 Hz, the length of each sample is also 128. Neighboring samples overlap by 1.28 seconds to enlarge the dataset. A total of 29,274 samples are collected, of which 26,283 are used for training and the remaining 2,991 for testing.

|
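The fixed-time windowing used for Dataset #3 follows directly from the numbers above: 2.56 s at 50 Hz gives 128 points per sample, and a 1.28 s overlap gives a hop of 64 points. A minimal sketch is shown here; the function name `fixed_windows` and its parameters are assumptions for illustration, not part of the released code.

```python
from typing import List, Sequence


def fixed_windows(
    signal: Sequence[float],
    rate_hz: float = 50.0,
    window_s: float = 2.56,
    overlap_s: float = 1.28,
) -> List[Sequence[float]]:
    """Split one sensor channel into fixed-duration windows.

    With the defaults, each window has round(2.56 * 50) = 128 points and
    consecutive windows start 128 - round(1.28 * 50) = 64 points apart.
    """
    win = round(window_s * rate_hz)
    hop = win - round(overlap_s * rate_hz)
    if hop <= 0:
        raise ValueError("overlap must be shorter than the window")
    # Only full windows are kept; a trailing partial window is dropped.
    return [signal[s:s + win] for s in range(0, len(signal) - win + 1, hop)]
```

Setting `overlap_s=0.0` gives the non-overlapping variant, in which each window starts where the previous one ends.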

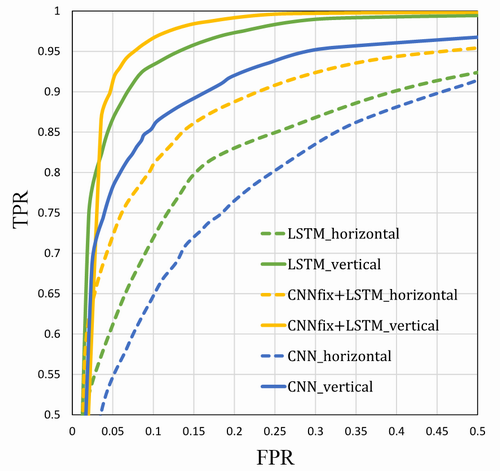

| 52 | ### Dataset #4 |

52 | ### Dataset #4

|

| 53 | This dataset is collected from 20 subjects. We also divide the gait curve into intervals of 2.56 seconds, with no overlap between samples. In total, 39,314 samples are collected, of which 35,373 are used for training and the remaining 3,941 for testing. |

53 | This dataset is collected from 20 subjects. We also divide the gait curve into intervals of 2.56 seconds, with no overlap between samples. In total, 39,314 samples are collected, of which 35,373 are used for training and the remaining 3,941 for testing.

|

| 54 | ### Dataset #5 |

54 | ### Dataset #5

|

| 55 | This dataset is used for authentication. It contains 74,142 authentication samples from 118 subjects, where the training set is constructed from 98 subjects and the test set from the other 20 subjects. There are 66,542 samples for training and 7,600 for testing. Each authentication sample contains a pair of data samples that come either from two different subjects or from the same subject. Each data sample consists of two-step accelerometer and gyroscope data, interpolated as described for Dataset #1 and Dataset #2. The two data samples are horizontally aligned to create an authentication sample. |

55 | This dataset is used for authentication. It contains 74,142 authentication samples from 118 subjects, where the training set is constructed from 98 subjects and the test set from the other 20 subjects. There are 66,542 samples for training and 7,600 for testing. Each authentication sample contains a pair of data samples that come either from two different subjects or from the same subject. Each data sample consists of two-step accelerometer and gyroscope data, interpolated as described for Dataset #1 and Dataset #2. The two data samples are horizontally aligned to create an authentication sample.

|

| 56 | ### Dataset #6 |

56 | ### Dataset #6

|

| 57 | This dataset is also used for authentication. The authentication samples are constructed in the same way as in Dataset #5. The only difference is that, when constructing an authentication sample, the two data samples are vertically aligned instead of horizontally aligned. |

57 | This dataset is also used for authentication. The authentication samples are constructed in the same way as in Dataset #5. The only difference is that, when constructing an authentication sample, the two data samples are vertically aligned instead of horizontally aligned. |
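The difference between horizontal and vertical alignment can be illustrated with a small sketch. It assumes, as an interpretation not stated explicitly above, that each data sample is stored as 6 channels (3 accelerometer axes and 3 gyroscope axes) of 128 points, that horizontal alignment concatenates the pair along the time axis (6×256), and that vertical alignment stacks the pair along the channel axis (12×128). The function names are hypothetical.

```python
from typing import List

Sample = List[List[float]]  # channels x time, assumed 6 x 128 here


def align_horizontal(a: Sample, b: Sample) -> Sample:
    """Place the two samples side by side along the time axis (assumed 6 x 256)."""
    if len(a) != len(b):
        raise ValueError("both samples need the same number of channels")
    return [list(row_a) + list(row_b) for row_a, row_b in zip(a, b)]


def align_vertical(a: Sample, b: Sample) -> Sample:
    """Stack the two samples along the channel axis (assumed 12 x 128)."""
    if len(a[0]) != len(b[0]):
        raise ValueError("both samples need the same length")
    return [list(row) for row in a] + [list(row) for row in b]


# Two dummy samples standing in for interpolated two-step gait data.
sample_a = [[0.0] * 128 for _ in range(6)]
sample_b = [[1.0] * 128 for _ in range(6)]
pair_h = align_horizontal(sample_a, sample_b)
pair_v = align_vertical(sample_a, sample_b)
```

Both layouts carry the same values; only the arrangement seen by the network differs, which matches the statement that Dataset #5 and Dataset #6 differ in alignment alone.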

| 58 | 58 | ||

| 59 | 59 | ||

| 60 | ## Datasets for Gait-Extraction |

60 | ## Datasets for Gait-Extraction

|

| 61 |  |

61 |  |

| 62 | ### Dataset #7 |

62 | ### Dataset #7

|

| 63 | We took 577 samples from 10 subjects, with |

63 | We took 577 samples from 10 subjects, with

|

| 64 | data shape of 6×1024. 519 of them were used for training |

64 | data shape of 6×1024. 519 of them were used for training

|

| 65 | and 58 were used for testing. Both the training and testing |

65 | and 58 were used for testing. Both the training and testing

|

| 66 | datasets have data from these 10 subjects. There is no |

66 | datasets have data from these 10 subjects. There is no

|

| 67 | overlap between the training sample and the test sample. |

67 | overlap between the training sample and the test sample.

|

| 68 | ### Dataset #8 |

68 | ### Dataset #8

|

| 69 | We took 1,354 samples from 118 subjects, with |

69 | We took 1,354 samples from 118 subjects, with

|

| 70 | data shape of 6×1024. In order to make the training and |

70 | data shape of 6×1024. In order to make the training and

|

| 71 | test data come from different subjects, we use 1,022 samples |

71 | test data come from different subjects, we use 1,022 samples

|

| 72 | from 20 subjects as training data and 332 samples from |

72 | from 20 subjects as training data and 332 samples from

|

| 73 | the other 98 subjects for testing. |

73 | the other 98 subjects for testing.

|

| 74 | ## Datasets Download: |

74 | ## Datasets Download:

|

| 75 | You can download these datasets from the following link: |

75 | You can download these datasets from the following link: |

| 76 | 76 | ||

| 77 | https://drive.google.com/drive/folders/1KOm-zROeOZH3e2tqYUpHAvIaBZSJGFm_?usp=sharing |

77 | https://drive.google.com/drive/folders/1KOm-zROeOZH3e2tqYUpHAvIaBZSJGFm_?usp=sharing |

| 78 | 78 | ||

| 79 | or https://1drv.ms/f/s!AittnGm6vRKLyh3yWS7XaXfyUNQp |

79 | or https://1drv.ms/f/s!AittnGm6vRKLyh3yWS7XaXfyUNQp |

| 80 | 80 | ||

| 81 | or BaiduYun: |

81 | or BaiduYun:

|

| 82 | https://pan.baidu.com/s/18mYLZspZT39G7TyOuQC5Aw |

82 | https://pan.baidu.com/s/18mYLZspZT39G7TyOuQC5Aw

|

| 83 | Passcode: mfz0 |

83 | Passcode: mfz0 |

| 84 | 84 | ||

| 85 | The classification and authentication datasets constructed from OU-ISIR and used in our paper are shared at |

85 | The classification and authentication datasets constructed from OU-ISIR and used in our paper are shared at

|

| 86 | Link:https://pan.baidu.com/s/1Q1MVM6Y53yr6WicG0cw8Vw |

86 | Link:https://pan.baidu.com/s/1Q1MVM6Y53yr6WicG0cw8Vw

|

| 87 | Passcode: v1ls |

87 | Passcode: v1ls |

| 88 | 88 | ||

| 89 | 89 | ||

| 90 | We also provide the original raw data of the dataset (98 subjects and 20 subjects): |

90 | We also provide the original raw data of the dataset (98 subjects and 20 subjects):

|

| 91 | link: https://pan.baidu.com/s/1aMiftAukgAuzoZftwJZiDw |

91 | link: https://pan.baidu.com/s/1aMiftAukgAuzoZftwJZiDw

|

| 92 | code:140w |

92 | code:140w |

| 93 | 93 | ||

| 94 | 94 | ||

| 95 | # Set up |

95 | # Set up

|

| 96 | ## Requirements |

96 | ## Requirements

|

| 97 | PyTorch 0.4.0 |

97 | PyTorch 0.4.0

|

| 98 | Python 3.6 |

98 | Python 3.6

|

| 99 | CUDA 8.0 |

99 | CUDA 8.0

|

| 100 | Our experiments ran on an Intel Core Xeon E5-2630 @ 2.3 GHz with 64 GB RAM and two GeForce GTX TITAN-X GPUs. |

100 | Our experiments ran on an Intel Core Xeon E5-2630 @ 2.3 GHz with 64 GB RAM and two GeForce GTX TITAN-X GPUs. |

| 101 | 101 | ||

| 102 | # Results |

102 | # Results

|

| 103 | ## Results for Gait-Extraction |

103 | ## Results for Gait-Extraction

|

| 104 |  |

104 |  |

| 105 | 105 | ||

| 106 | Here are four examples of walking data extraction results, where blue represents walking data, green represents non-walking data, and red represents unclassified data. |

106 | Here are four examples of walking data extraction results, where blue represents walking data, green represents non-walking data, and red represents unclassified data.

|

| 107 | In dataset #7, our method achieved an accuracy of 90.22%, |

107 | In dataset #7, our method achieved an accuracy of 90.22%,

|

| 108 | which shows that our method is effective for segmentation of |

108 | which shows that our method is effective for segmentation of

|

| 109 | walking data and non-walking data. Further, in Dataset #8, |

109 | walking data and non-walking data. Further, in Dataset #8,

|

| 110 | where the training data and the testing data are respectively |

110 | where the training data and the testing data are respectively

|

| 111 | from different subjects, our method achieved an accuracy of |

111 | from different subjects, our method achieved an accuracy of

|

| 112 | 85.57%, which shows that our method has good robustness on |

112 | 85.57%, which shows that our method has good robustness on

|

| 113 | datasets that are not involved in training. |

113 | datasets that are not involved in training. |

| 114 | 114 | ||

| 115 | ## Results for Identification |

115 | ## Results for Identification

|

| 116 |  |

116 |  |

| 117 | 117 | ||

| 118 | Performance of different LSTM networks. The classification experiments are conducted on 118 subjects. For each group of results, the left, middle, and right bars correspond to the results of the single-layer LSTM (SL-LSTM), the bi-directional LSTM (Bi-LSTM) and the double-layer LSTM (DL-LSTM), respectively. |

118 | Performance of different LSTM networks. The classification experiments are conducted on 118 subjects. For each group of results, the left, middle, and right bars correspond to the results of the single-layer LSTM (SL-LSTM), the bi-directional LSTM (Bi-LSTM) and the double-layer LSTM (DL-LSTM), respectively. |

| 119 | 119 | ||

| 120 |  |

120 |  |

| 121 | 121 | ||

| 122 | Here are the classification results using Dataset #1 and Dataset #1. |

122 | Here are the classification results using Dataset #1 and Dataset #1. |

| 123 | 123 | ||

| 124 | ## Results for Authentication |

124 | ## Results for Authentication

|

| 125 |  |

125 |  |

| 126 | 126 | ||

| 127 | Shown here are the authentication results obtained by |

127 | Shown here are the authentication results obtained by

|

| 128 | four deep-learning-based methods, i.e., LSTM, CNN, ‘CNN+LSTM’, |

128 | four deep-learning-based methods, i.e., LSTM, CNN, ‘CNN+LSTM’,

|

| 129 | and ‘CNNfix+LSTM’, and three traditional methods, |

129 | and ‘CNNfix+LSTM’, and three traditional methods,

|

| 130 | i.e., EigenGait, Wavelet and Fourier. Note that Dataset #5 and Dataset #6 are constructed from the same 118 subjects and the same samples. The only difference is that the input data are aligned in two different ways: horizontally for Dataset #5 and vertically for Dataset #6. |

130 | i.e., EigenGait, Wavelet and Fourier. Note that Dataset #5 and Dataset #6 are constructed from the same 118 subjects and the same samples. The only difference is that the input data are aligned in two different ways: horizontally for Dataset #5 and vertically for Dataset #6. |

| 131 | 131 | ||

| 132 |  |

132 |  |

| 133 | 133 | ||

| 134 | 134 | ||

| 135 | # Reference |

135 | # Reference

|

| 136 | ``` |

136 | ```

|

| 137 | @article{zou2020gait, |

137 | @article{zou2020gait,

|

| 138 | title={Deep learning-based gait recognition using smartphones in the wild}, |

138 | title={Deep learning-based gait recognition using smartphones in the wild},

|

| 139 | author={Q. Zou and Y. Wang and Y. Zhao and Q. Wang and Q. Li}, |

139 | author={Q. Zou and Y. Wang and Y. Zhao and Q. Wang and Q. Li},

|

| 140 | journal={IEEE Transactions on Information Forensics and Security}, |

140 | journal={IEEE Transactions on Information Forensics and Security},

|

| 141 | volume={15}, |

141 | volume={15},

|

| 142 | number={1}, |

142 | number={1},

|

| 143 | pages={3197--3212}, |

143 | pages={3197--3212},

|

| 144 | year={2020}, |

144 | year={2020},

|

| 145 | } |

145 | }

|

| 146 | ``` |

146 | ``` |

| 147 | 147 | ||

| 148 | # Copyright: |

148 | # Copyright:

|

| 149 | This dataset was collected for academic research. |

149 | This dataset was collected for academic research.

|

| 150 | # Contact: |

150 | # Contact:

|

| 151 | For any questions about this dataset, please contact Dr. Qin Zou (qzou@whu.edu.cn). |

151 | For any questions about this dataset, please contact Dr. Qin Zou (qzou@whu.edu.cn).

|
